Saturday 15 February, 2014, 14:01 - Spectrum Management

Posted by Administrator

When Goldilocks visited the house of the three bears, she tried their porridge and found one bowl too hot, one too salty but the third one just right. It seems that the ITU may have employed Goldilocks to help them put together their forecasts for mobile spectrum demand. Why? Read on...

Leafing through the various responses to Ofcom's mobile data strategy consultation, one particular response raised more than an eyebrow. The response from the European Satellite Operators' Association (ESOA) points out that the model used by Ofcom to calculate the demand for spectrum for IMT (mobile broadband) services has a great big, whoppingly large, error in it. The model is based on that of the ITU, which is snappily titled 'ITU-R M.1768-1'. It was originally used at the 2007 World Radiocommunication Conference (WRC-07) to set expectations on how much spectrum would be required for mobile broadband services up to, and including, the year 2020.

At the time (2007), the ITU model predicted that by 2010, between 760 and 840 MHz of spectrum would be needed for IMT services. In reality, in most countries, little more than 400 MHz was actually available. And yet, ironically, the amount of data traffic being carried was far in excess of that which the ITU predicted. Not deterred by this apparent flaw in their logic, the ITU has updated the model this year and published a new set of results. These new results show a demand for spectrum by 2020 of between 1340 and 1960 MHz.

What ESOA have spotted is that if you apply the traffic densities which consultancy RealWireless have assumed in their work for Ofcom, or those developed by the ITU, the resulting total traffic for the UK would be orders of magnitude greater than the actual traffic forecasts. Figures 40 and 44 of their report clearly repeat these errors. The ESOA consultation response illustrates it quite nicely, as follows:

| Type | Area (sqkm) | Traffic Density (PB/month/sqkm) | Traffic (PB/month) |
|---|---|---|---|
| Urban | 210 | 30 - 100 | 6300 - 21000 |
| Suburban | 4190 | 10 - 20 | 41900 - 83800 |
| Rural | 238600 | 0.03 - 0.3 | 7160 - 71600 |
| Total | 243000 | | 55360 - 176400 |
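As a rough sanity check, the arithmetic behind the table can be reproduced in a few lines of Python. This is only a sketch: the areas and densities are copied from the table, the 1000 PB/month comparison is the approximate 'high market setting' forecast for 2020 from the RealWireless study, and the names used are made up for illustration.

```python
# Area (sq km) and traffic density range (PB/month/sq km) per area type,
# copied from the ESOA table.
AREAS = {
    "Urban": (210, 30, 100),
    "Suburban": (4190, 10, 20),
    "Rural": (238600, 0.03, 0.3),
}

# Approximate forecast UK traffic by 2020, 'high market setting' (PB/month).
FORECAST_PB_PER_MONTH = 1000


def total_traffic(areas):
    """Sum area x density over all area types, for the low and high densities."""
    low = sum(area * d_low for area, d_low, _ in areas.values())
    high = sum(area * d_high for area, _, d_high in areas.values())
    return low, high


low, high = total_traffic(AREAS)
print(f"Implied traffic: {low:.0f} to {high:.0f} PB/month")
print(
    f"Ratio to forecast: {low / FORECAST_PB_PER_MONTH:.0f}x "
    f"to {high / FORECAST_PB_PER_MONTH:.0f}x"
)
```

The totals come out within rounding of the table's figures, i.e. somewhere between roughly 55 and 176 times the forecast.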

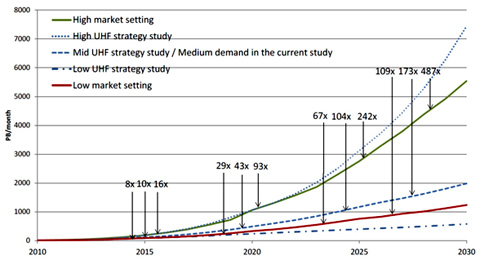

What this shows is that the total monthly traffic for the UK, as calculated from the RealWireless traffic assumptions, is between 55,360 and 176,400 PB (petabytes) per month. Compare this to their traffic forecasts, which show the total UK traffic reaching around 1000 PB/month by 2020 even in the 'high market setting' in the chart below.

Source: Figure 40 of 'Study on the future UK spectrum demand for terrestrial mobile broadband applications', 23 June 2013

So if the ITU and Ofcom models assume traffic levels 100 times greater than reality, why is the resulting demand for spectrum which they output in line with many other industry predictions? Without digging deeply into the model (which is immensely complex), it's difficult to say, but it stands to reason that there must be some assumptions that have been 'adjusted' to make the results seem believable - fiddle factors as they're normally called.

This is where Goldilocks comes in:

- If the ITU model produced a result which said that 20,000 MHz of spectrum was needed for mobile broadband by 2020 (which it ought to given the high data traffic it is trying to model), no one would believe it - too hot!

- If it had said that 200 MHz of spectrum was needed it would equally have not been believed - too salty!

- But as it produces a result around 2000 MHz it is seen as just right!

Indeed the ITU themselves seem to recognise that there is some fiddling going on in a note they provide hidden deep in the annexes in their paper entitled ITU-R M.2290-2014 which says:

The spectrum efficiency values ... are to be used only for spectrum requirement estimation by Recommendation ITU-R M.1768. These values are based on a full buffer traffic model... They are combined with the values of many other parameters ... to develop spectrum requirement estimate for IMT. In practice, such spectrum efficiency values are unlikely to be achieved.

What a 'full buffer traffic model' is, is anyone's guess, but it seems to suggest that this factor, and others, may not be values that mobile operators, or anyone else for that matter, would recognise. The problem with any complex model of this type is that it is difficult to understand except by the academic elite that prepared it (or by Charlie Eppes on Numb3rs), and equally difficult to sense-check. It seems that the sense checking has not taken place and what is left succumbs to the old computing law, "garbage in = garbage out". Many countries have done their own calculations: in many cases these have shown smaller figures than those espoused by the ITU, though some have shown higher figures. Where such figures are based on the ITU model itself, they should, of course, now be taken with a very large pinch of salt.

To take a real life example, Verizon Wireless in the USA claimed in June 2013 that:

57 percent of Verizon Wireless' data is carried on its 4G LTE.

Putting this in perspective, Verizon has around 40% of the US market and is using just 20 MHz of spectrum for its LTE network. This means that with an LTE network using 5 times as much spectrum (i.e. 100 MHz), it ought to be possible to carry the whole of the US's mobile data traffic today. Allowing for growth in data traffic of 33% per year (a figure which both Vodafone and Telefonica have cited as their actual data growth in 2013), by 2020 the US would need a total of 740 MHz of spectrum for mobile data, a far cry from the 1960 MHz being demanded by the ITU. And the 740 MHz figure does not take into account any additional savings that might be realised using more efficient technology such as LTE-Advanced.
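For anyone wanting to check the back-of-an-envelope sum, it can be written out as follows. It is a sketch under stated assumptions rather than a forecast: it takes the 57% and 40% figures at face value, assumes spectrum scales in direct proportion to traffic, rounds the starting point up to 100 MHz as the text does, and assumes seven years of compound growth (2013 to 2020), which is the period that reproduces a figure of around 740 MHz.

```python
VERIZON_MARKET_SHARE = 0.40  # share of the US market, from the text
LTE_SHARE_OF_DATA = 0.57     # share of Verizon's data carried on LTE
LTE_SPECTRUM_MHZ = 20        # spectrum used by Verizon's LTE network
ANNUAL_GROWTH = 0.33         # yearly growth in data traffic
YEARS = 7                    # assumption: 2013 to 2020


def spectrum_for_whole_market(spectrum_mhz, market_share, lte_share):
    """Scale spectrum linearly from the fraction of traffic carried to 100%."""
    return spectrum_mhz / (market_share * lte_share)


def grow(spectrum_mhz, annual_growth, years):
    """Compound the spectrum requirement at a fixed yearly growth rate."""
    return spectrum_mhz * (1 + annual_growth) ** years


exact_today = spectrum_for_whole_market(
    LTE_SPECTRUM_MHZ, VERIZON_MARKET_SHARE, LTE_SHARE_OF_DATA
)
print(f"Spectrum for all US traffic today: {exact_today:.0f} MHz (text rounds to 100)")
print(f"Spectrum needed by 2020: {grow(100, ANNUAL_GROWTH, YEARS):.0f} MHz")
```

Starting from the unrounded figure rather than 100 MHz would give a somewhat lower 2020 requirement, so the rounding errs on the generous side.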

The inexorable growth in demand for mobile data is not in question, though at some point it will become too expensive to deliver the 'all you can eat' packages that people expect. Who is going to pay US$10 to download one film on their mobile when the subscription to Netflix for the whole month is less than that, and they could use their home WiFi and do it for next to nothing? What is now in question is how much spectrum you need to deliver it. Maybe a good starting point would be to stop where we are and wait a few years for things to settle down, and then see what the real story is. Maybe Goldilocks will have run into the forest to hide from the ITU, and a new bowl of porridge will have been made that tastes a whole lot better.


The BBC recently reported that Radio Russia have quietly switched off the majority of their long-wave broadcast transmitters. Whilst the silent passing of Russia's long wave service will not rattle the front pages either in Russia or anywhere else for that matter, it does raise the question of the long-term viability of long-wave broadcasting.

During the early 1990s there was a resurgence of interest in long-wave radio in the UK caused by the success of the pop station Atlantic 252. But by later in the decade, the poorer quality of long-wave broadcasting compared to FM, together with the increased proliferation of local FM services in the UK eventually led to the demise of the station. Similar logic appears to have been used by the Russian authorities who now have a much more extensive network of FM transmitters and clearly feel that the expense of operating long-wave is no longer justified.


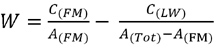

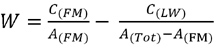


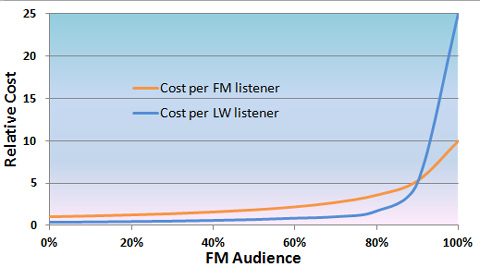

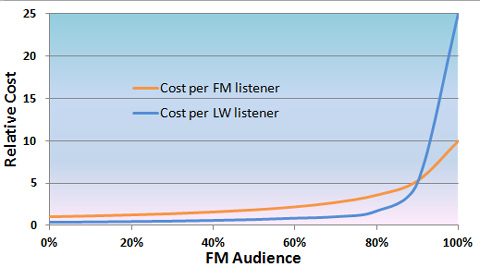


One of the great advantages of long-wave broadcasting is the large area that can be covered from a single transmitter. For countries whose population is spread over very wide areas, long-wave offers a means to broadcast to them with very few transmitters. Conversely, the large antennas and high transmitter powers required to deliver the service make it an expensive way to reach audiences. Presumably there is a relatively simple equation that describes the cost-benefit of long-wave broadcasting, i.e.:

Where:

W = Worthwhileness of Long-Wave Broadcasting

C = Total cost of providing the service

A = Audience

FM = FM

LW = Long Wave

Tot = Total


As long as W>0 as A(FM) increases, it continues to be worthwhile to broadcast on long-wave, as the cost of providing the service is less than the cost of doing the same thing using FM.

The cost of providing an FM service - C(FM) - is not constant, and will increase with the audience served, and not in a linear fashion either. The final few listeners will cost significantly more than the first few. This is because stations which only serve small, sparse communities tend to be more costly (per person) than ones serving densely packed areas.

The cost of providing the LW service - C(LW) - however, is largely constant regardless of how many people listen to it.

It's therefore possible to draw a graph of the cost per person - C/A - of the FM audience and the cost per person of the long-wave audience, as the FM audience increases.

The figures used in the graph above are illustrative only. They assume that:

- The cost per person of providing an FM service increases by a factor of 10 between the first and the last person served;

- The cost per person of providing the long-wave service is initially only a third of that of providing the same service on FM.
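To make the shape of the argument concrete, here is one way the two illustrative assumptions could be turned into numbers. This is a guess at the kind of model behind the graph, not a reconstruction of it: the linear rise in FM cost per person, the normalised cost units and the function names are all assumptions made for this sketch, and the long-wave cost is taken as fixed and shared among whoever is not yet covered by FM.

```python
def fm_cost_per_person(f):
    """Cost of adding one more FM listener at FM coverage fraction f (0..1).

    Assumed to rise linearly from 1 unit (first person) to 10 units (last).
    """
    return 1.0 + 9.0 * f


def lw_cost_per_person(f):
    """Fixed long-wave cost shared among the listeners not yet served by FM.

    At f = 0 this is one third of the initial FM cost per person.
    """
    return (1.0 / 3.0) / (1.0 - f)


def crossover(steps=10000):
    """Lowest FM coverage fraction at which long-wave costs more per person."""
    for i in range(steps):
        f = i / steps
        if lw_cost_per_person(f) > fm_cost_per_person(f):
            return f
    return None


point = crossover()
print(f"Long-wave stops being cheaper at about {point:.1%} FM coverage")
```

Under these particular assumptions the two curves only cross when FM reaches around 97% of the population; different curve shapes would move that point considerably.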

Of course there are many other factors to take into account, in particular the difference in service quality between FM and long-wave, and the proportion of receivers that have a long-wave function. There are thus other factors that will hasten the end of long-wave as FM coverage increases. The same could largely be said for medium-wave where arguably, the problems of night time interference make it even worse off than long-wave (though more receivers have it).

Wireless Waffle reported back in 2006 on the various organisations planning to launch long-wave services. Not surprisingly, none of them have (yet) come to fruition.

There is, however, one factor in favour of any country maintaining a long-wave service (or even medium-wave for that matter), and it's this: simplicity. It is possible to build a receiver for long-wave (or medium-wave) AM transmissions using nothing more than wire and coal (and a pair of headphones), as prisoners of war once did.

Prisoners of war during WWII had to improvise from whatever bits of junk they could scrounge in order to build a radio. One type of detector used a small piece of coke, which was a derivative of coal often used in heating stoves, about the size of a pea.

After much adjusting of the point of contact on the coke and the tension of the wire, some strong stations would have been received.

If the POW was lucky enough to scrounge a variable capacitor, the set could possibly receive more frequencies.

Source: www.bizzarelabs.com

In the event (God forbid) of a national emergency that took out electricity (such as a massive solar flare), it would still be possible for governments to communicate with their citizens using simple broadcasting techniques and for citizens to receive them using simple equipment. Not so with digital broadcasting! Ironically, most long-wave transmitters use valves which are much less prone to damage from solar flares than transistors.

So whilst long-wave services are on the way out in Russia and elsewhere, it will be interesting to see whether the transmitting equipment is completely dismantled at all sites, or whether some remain for times of emergency. Of course if every long-wave transmitter is eventually turned off, there is some interesting radio spectrum available that could be re-used for something else... offers on a postcard!

It wasn't that long ago that Wireless Waffle was discussing the need for spectrum for programme making and special events (PMSE). At the time we were considering how the needs of the burgeoning demand for radio spectrum for the Eurovision Song Contest would be met. Most radiomicrophones currently operate in the UHF television band, in the gaps between transmitters. These gaps (which are there to protect neighbouring television transmitters from interfering with each other) are also being eyed by the wireless broadband and machine-to-machine community amongst others and have been given the moniker 'white space' (though one person infinitely more learned in these things believes they should correctly be considered 'grey spaces' as they aren't as white as you might believe).

There are also moves afoot to squash television broadcasting into even less spectrum to make way for more mobile broadband. At present the spectrum from 470 to 790 MHz is generally available (40 channels - channels 21 to 60). The new plans involve using the spectrum from 694 MHz upwards for more mobile broadband leaving the terrestrial television broadcasters with just 28 channels (channels 21 to 48). And at the moment, there is no guarantee that there won't be further erosion of the UHF television band for other uses.


If TV use is squashed into less spectrum, there will be less 'grey space' available for radiomicrophones, or for anyone else for that matter. To make matters worse, the tuning range of most radiomicrophones (and similar devices) is very limited and each time they are forced to change frequency, new equipment needs to be bought. Of course, this is good news for manufacturers such as Sennheiser and Shure, but is bad news for the end users.

The need for spectrum for radiomicrophones and other PMSE uses is recognised at a European level in the Radio Spectrum Policy Programme (RSPP), article 8.5 of which states:

Member States shall, in cooperation with the Commission, seek to ensure the necessary frequency bands for PMSE, in accordance with the Union's objectives to improve the integration of internal market and access to culture.

So what can be done? Are PMSE users to be left as the nomads of the radio spectrum, packing down their camps, wandering across the desert and re-assembling their tents in a new area every 3-4 years? Or is there a long(er)-term solution that would allow them to lay solid foundations and put down some bricks?

For many years, a band at 1785 - 1800 MHz has been available for wireless microphone use, but only for digital microphones (see CEPT Report 50). Almost no use has been made of the band and the views of Audio-Technica illustrate why this is the case:

The frequency range [1800 MHz] is not really suited for wireless microphones, as the higher frequencies (i.e. shorter wavelengths) create more body absorption and shadow effects due to the directivity, etc. The use of these frequencies will only work adequately when there is a line of sight and a short distance between the transmitter and the receiver.

Using diversity reception (already commonplace in radiomicrophone equipment) and careful antenna placement, there is no reason why the 1.8 GHz band could not prove useful. But one of the other problems with this band is that radiomicrophones are not well suited to using digital technology. To send audio digitally, it must first be converted from analogue to digital. For 'high quality' audio, this would yield a 'raw' data rate of at least 512 kbps, if not more - and more like 1 Mbps by the time error correction is added in. If we were to try to transmit this data in the 200 kHz channels that microphones currently use, we would have to use a high-order modulation scheme (such as 8-PSK or 16 QAM) and this causes problems because:

- transmitters need to be linear meaning they draw more power and would drain batteries much more quickly;

- higher-order modulation schemes require decent signal-to-noise levels and thus higher powered transmitters;

- it takes time to encode and decode complex modulation schemes.
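The sums behind the data rates can be sketched as below. The sampling rate and word length are assumptions chosen because they give the 512 kbps mentioned in the text (the figures actually used are not stated), and one symbol per second per hertz of channel is an idealised upper limit that real transmitters would not reach.

```python
import math

CHANNEL_BANDWIDTH_HZ = 200_000  # channel width quoted in the text


def raw_bit_rate(sample_rate_hz, bits_per_sample, channels=1):
    """Bit rate of uncompressed PCM audio in bits per second."""
    return sample_rate_hz * bits_per_sample * channels


def bits_per_symbol_needed(bit_rate_bps, bandwidth_hz, symbols_per_hz=1.0):
    """Minimum whole number of bits each symbol must carry to fit the channel."""
    return math.ceil(bit_rate_bps / (bandwidth_hz * symbols_per_hz))


raw = raw_bit_rate(32_000, 16)  # assumed: 32 kHz sampling, 16-bit mono
for label, rate in (("raw", raw), ("with error correction", 1_000_000)):
    bits = bits_per_symbol_needed(rate, CHANNEL_BANDWIDTH_HZ)
    print(
        f"{label}: {rate / 1000:.0f} kbps needs at least {bits} bits/symbol "
        f"({2 ** bits} constellation points)"
    )
```

Three bits per symbol corresponds to 8-PSK; on this idealised basis the 1 Mbps case would need something denser still, which only makes the problems listed above worse.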

This means that the other way in which digital systems use spectrum efficiently is also a no-go. Compressing the data (e.g. using a compressed audio format such as mp3 instead of the raw digitised audio) also takes time - generally longer than the time taken to transmit the signal digitally. And so we reach an impasse: compressing the audio to use less spectrum takes too long, and transmitting the raw data uses more spectrum than its analogue counterpart and involves a number of other trade-offs. All this means that digital radiomicrophones, whilst slowly being developed, tend to offer no better performance than analogue versions (and at much higher cost).

But the fact is that if the radiomicrophone industry does not make some strides towards adopting higher frequencies or more spectrum efficient modulation techniques, it might find itself without enough spectrum in which to operate.

So where could microphones go? There are a whole host of frequencies which are currently assigned at a European level by CEPT for radiomicrophone use (as per ERC Recommendation 70-03, Annex 10). These include:

- 29.7 - 47 MHz - manufacturers claim that these frequencies are not ideal as they are too noisy and antennas are too large (fussy lot aren't they)

- 174 - 216 MHz - VHF band III - mostly occupied by TV broadcasting and DAB radio

- 470 - 790 MHz - the aforementioned UHF band that is now being squeezed

- 863 - 865 MHz - licence exempt and shared with other devices

- 1785 - 1805 MHz - 'too high'

- 1215 - 1350 MHz - mostly an aeronautical radar band but shared with many other uses and therefore presumably sharable with others

- 1350 - 1400 MHz - low capacity fixed links and some mobile services

- 1492 - 1518 MHz - more low capacity fixed links - and already proposed in ERC 70-03 but available in a tiny amount in the UK only

- 1675 - 1710 MHz - a downlink band for meteorological satellites but not heavily used - sterilisation zones around official downlink sites would protect professional users

Wednesday 25 September, 2013, 11:35 - Radio Randomness, Spectrum Management, Equipment Reviews

Posted by Administrator

In the past, Wireless Waffle has discussed various things that cause radio interference but which are not supposed to, including, for example, Power Line Telecommunications devices. This time around it's the turn of a Class T audio amplifier to come under the spotlight.

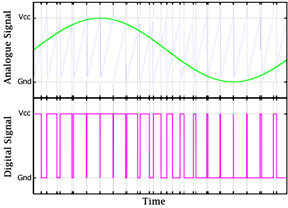

Class T amplifiers are really Class D amplifiers but are supposedly more efficient. Any clearer? No, probably not. The idea behind these types of audio amplifiers (noting that the Class D principle is also used in some radio transmitters too) is that instead of amplifying an analogue signal in an analogue way, such that the output voltage is just an amplified version of the input voltage, they switch the output voltage on and off at a frequency higher than the audio signal, and then use a filter on the output to smooth the square wave that they produce back into a nice analogue signal. This method is known as pulse width modulation.
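A toy illustration of the idea, for the curious. This is a sketch of textbook pulse width modulation against a triangle-wave carrier, not a description of what any particular amplifier chip does: the 400 kHz switching frequency and the 1 kHz test tone are made-up values, and averaging over one switching period is a crude stand-in for the output filter.

```python
import math

F_CARRIER_HZ = 400_000  # assumed switching frequency
F_AUDIO_HZ = 1_000      # assumed test tone


def triangle(t, f_carrier):
    """Triangle wave between -1 and +1 at frequency f_carrier."""
    phase = (t * f_carrier) % 1.0
    return 4.0 * abs(phase - 0.5) - 1.0


def pwm(signal, t, f_carrier):
    """Switch fully on (+1) when the signal exceeds the carrier, else off (-1)."""
    return 1.0 if signal(t) > triangle(t, f_carrier) else -1.0


def averaged_output(signal, f_carrier, period_index, samples=1000):
    """Mean of the switched output over one carrier period."""
    t0 = period_index / f_carrier
    total = 0.0
    for i in range(samples):
        t = t0 + (i + 0.5) / (samples * f_carrier)
        total += pwm(signal, t, f_carrier)
    return total / samples


def tone(t):
    """Audio input: a sine wave at 80% of full scale."""
    return 0.8 * math.sin(2 * math.pi * F_AUDIO_HZ * t)


# Period 100 starts at t = 0.25 ms, the positive peak of the 1 kHz tone.
print(f"Input at peak: {tone(100 / F_CARRIER_HZ):.3f}")
print(f"Averaged switched output: {averaged_output(tone, F_CARRIER_HZ, 100):.3f}")
```

The output only ever takes the values +1 and -1, yet its average over each switching period follows the audio input, which is all the filter has to recover.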

This switching technique is exactly the same one that is used in the majority of modern power supplies (SMPS) and has the prime advantage that as the transistors that do the switching are either turned on or off, they are never in some intermediate state where they would have to act as a resistor and in doing so dissipate power and heat. So they are highly efficient and it is possible to generate audio with over 90% efficiency, meaning that more of the power is converted to sound and less is lost as heat, which is, after all, a very admirable quality.

As with switch mode power supplies, a good filter is critical in ensuring that none of the original square waves find their way to the output. Square waves are very good at producing harmonics and therefore are equally good at generating radio signals and, of course, radio interference. There have been many cases of switch mode power supplies causing such radio interference and their use in, for example, LED lighting, means that the number of possible sources of interference is ever increasing.
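To put some rough numbers on the harmonics: the Fourier series of an ideal square wave contains only odd harmonics, with the n-th harmonic having 1/n of the amplitude of the fundamental. The sketch below lists the first few for an assumed 400 kHz switching frequency (a made-up figure, not one measured from any device). A real pulse-width-modulated output is not a perfect 50% duty square wave, so it would contain even harmonics too.

```python
import math


def square_wave_harmonics(f_switch_hz, count):
    """First `count` odd harmonics of an ideal +/-1 square wave.

    Returns (frequency in Hz, amplitude) pairs, using the Fourier series
    amplitude 4 / (pi * n) for odd n.
    """
    harmonics = []
    for k in range(count):
        n = 2 * k + 1
        harmonics.append((n * f_switch_hz, 4.0 / (math.pi * n)))
    return harmonics


for freq, amplitude in square_wave_harmonics(400_000, 5):  # assumed 400 kHz
    print(f"{freq / 1e6:.1f} MHz  relative amplitude {amplitude:.3f}")
```

With those assumed figures the third and fifth harmonics land at 1.2 MHz and 2 MHz, the former sitting in the medium-wave broadcast band.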

The main problem is that, in many cases, the device will work without the filter fitted - if (and only if) the device that it is powering is not too fussy about all those square waves (e.g. an LED) or has a method of smoothing them out itself (e.g. a loudspeaker). A loudspeaker is basically a large inductor, which is what the filters in switching amplifiers also comprise. Feeding the nasty square waves on the output of the switcher directly into a loudspeaker will not result in a noticeable loss in fidelity (assuming the switching frequency is well above the audible frequency range), nor any particular loss of efficiency. So why fit the filter? To stop radio interference, that's why.

So step up to the examination table, the Topping TP20-MK2 Class T Digital Mini Amplifier

In cases such as these, there is little that can be done. Other than taking the device apart and replacing the filter components with better ones (an idea that is not as daft as it sounds), the solution is to junk the device and use a traditional linear amplifier instead. Which is what has been done. Bye bye trendy, offendy Topping, hello dusty, trusty Sony.